ASR Benchmark Construction Pipeline

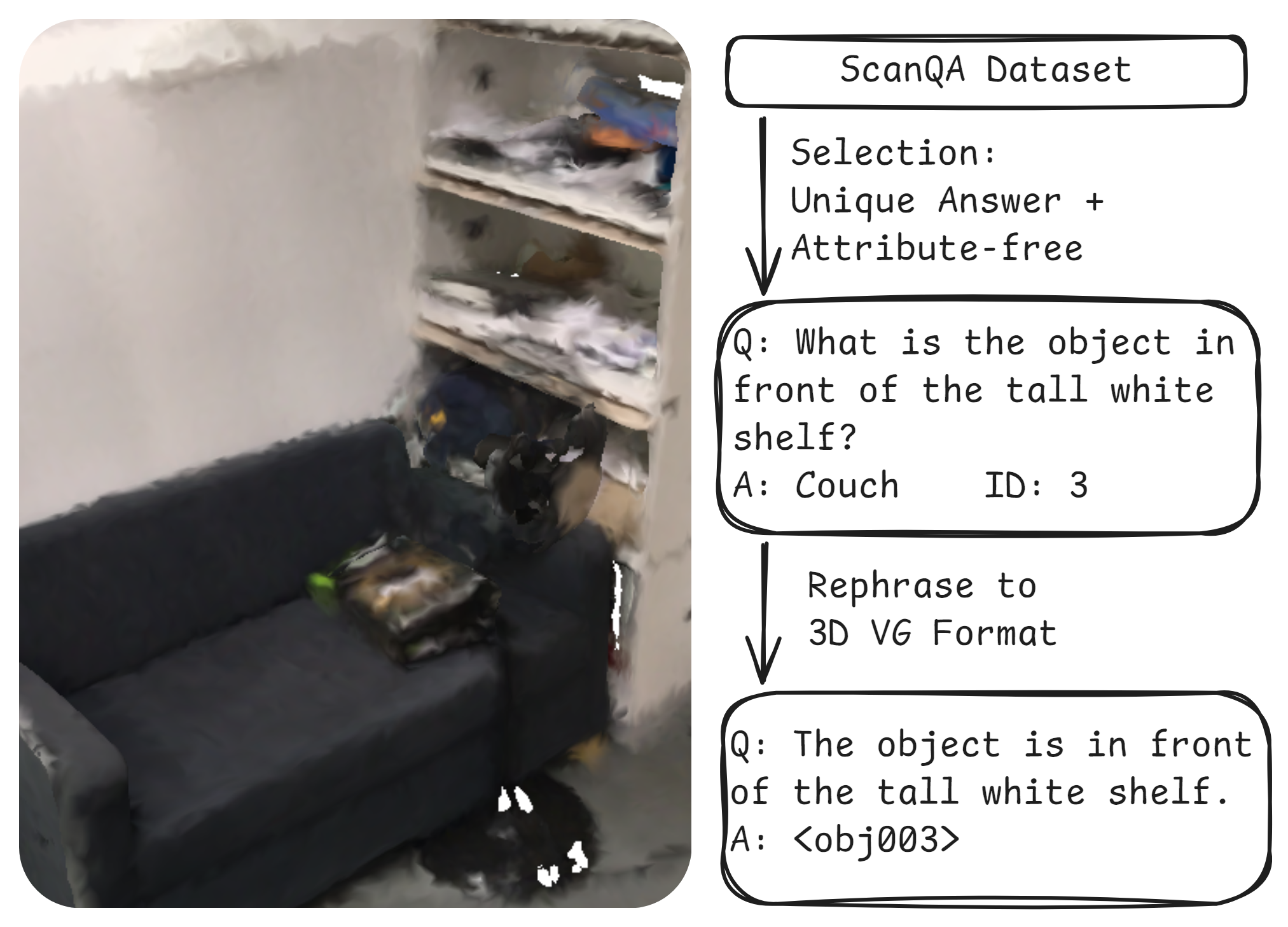

Figure 1: The construction pipeline of the ASR benchmark.

Figure 2: Examples of questions that will be excluded when filtering on the ScanQA dataset.

① The question "What is the object surrounding the table?" should be excluded as there are multiple correct answers.

② The question "What is the grey object next to the table under the TV?" should be excluded as the question tells that the target object is grey and the model can simply identify the target object by color without spatial reasoning.